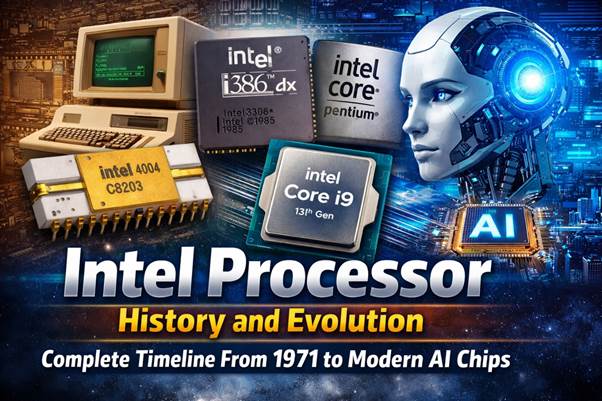

Intel Processor History and Evolution – Complete Timeline From 1971 to Modern AI Chips (PART 1)

The story of Intel is closely tied to the development of modern computing. From the earliest microprocessors used in calculators to today’s powerful chips running artificial intelligence workloads, data centers, and personal computers, Intel has played a central role in shaping the digital world.

Modern computers, smartphones, and servers rely on highly advanced processors that can perform billions of calculations per second. These chips power operating systems such as Windows, Linux, Android, and macOS. They also handle complex workloads such as programming, password encryption, PDF rendering, and the large cloud computing platforms run by companies such as Google, Oracle, and HP.

To understand how modern computers evolved, it is important to explore the history of Intel processors and the engineers, companies, and innovations that shaped the industry. The development of CPUs also influenced hardware ecosystems that include RAM, storage technologies such as floppy disks and USB drives, and modern semiconductor devices produced by companies like Samsung, Sony, and Motorola.

This article provides a complete historical overview of Intel’s journey, from the founding of the company in 1968 to the latest generation of hybrid processors designed for artificial intelligence and high-performance computing.

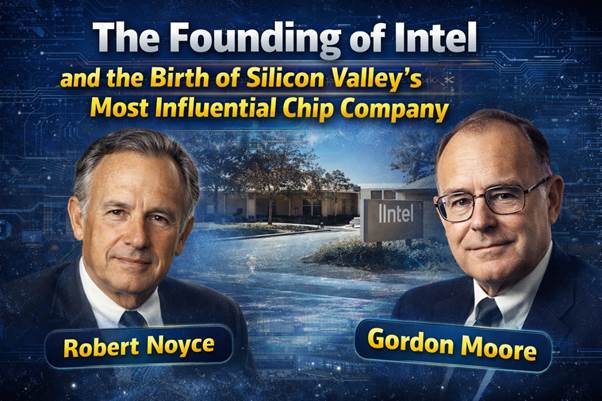

The Founding of Intel and the Birth of Silicon Valley’s Most Influential Chip Company

Robert Noyce, Gordon Moore, and the Creation of Intel

The origins of Intel trace back to the late 1960s, a time when the semiconductor industry was still in its early stages. Two influential engineers—Robert Noyce and Gordon Moore—played a crucial role in shaping modern microelectronics.

Both scientists previously worked at Fairchild Semiconductor, one of the pioneering companies of Silicon Valley. At Fairchild, they helped develop integrated circuits that dramatically reduced the size and cost of electronic components.

However, by 1968 they decided to leave the company and start a new venture focused entirely on semiconductor innovation. Their goal was to create faster, more reliable chips that could power future computing systems.

Venture Capital and the First Intel Funding

Launching a semiconductor company required substantial financial investment. The founders secured funding from venture capitalist Arthur Rock, who helped raise approximately $2.5 million for the startup.

The company was initially named NM Electronics, but the founders soon changed it to Intel, short for Integrated Electronics. This name reflected the company’s mission to build advanced integrated circuits that combined multiple electronic components on a single chip.

Intel established its first headquarters in Mountain View, California, in the heart of what would soon become known as Silicon Valley.

Why Intel Focused on Semiconductor Memory

During its early years, Intel concentrated primarily on producing semiconductor memory chips. At the time, computers relied heavily on magnetic core memory, which was expensive and difficult to scale.

Intel engineers developed dynamic random-access memory (DRAM) chips that were faster, smaller, and cheaper than previous memory technologies. These innovations helped computers become more compact and affordable.

Computer manufacturers such as IBM and HP began adopting semiconductor memory in their systems. This transition laid the foundation for the personal computer revolution that would follow in the 1980s.

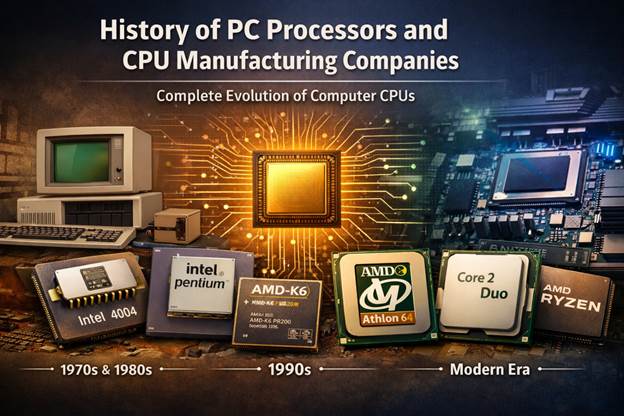

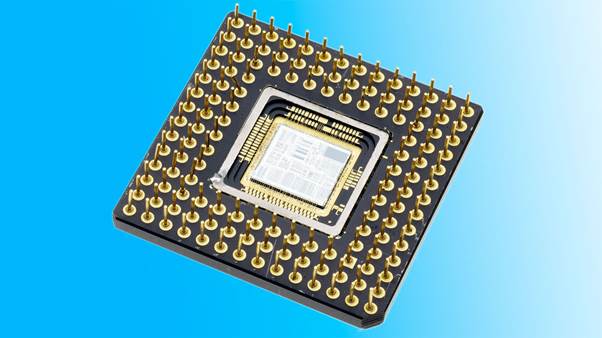

Intel 4004 – The First Commercial Microprocessor in History (1971)

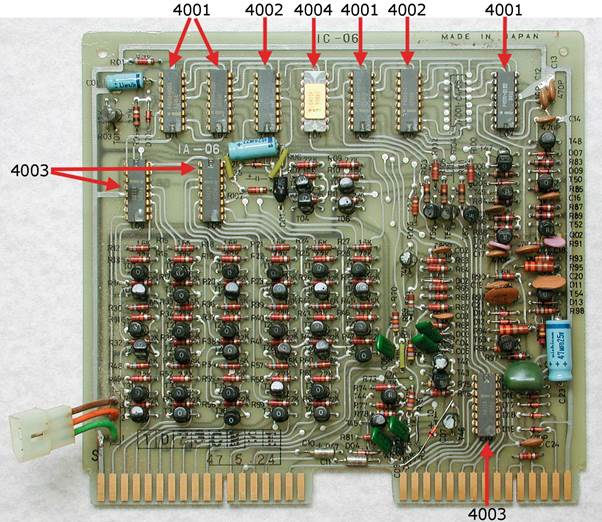

Busicom Calculator Project and the Birth of the CPU

In the late 1960s, a Japanese calculator manufacturer called Busicom approached Intel with a request to design custom chips for a new programmable calculator.

Instead of designing multiple specialized chips, Intel engineer Ted Hoff proposed a revolutionary idea: create a general-purpose processing unit that could perform different operations through software instructions.

This idea became the foundation for the world’s first commercial microprocessor.

The Engineers Behind the First Processor

Several engineers played critical roles in developing the Intel 4004 processor:

- Federico Faggin

- Ted Hoff

- Stan Mazor

- Masatoshi Shima

Federico Faggin was particularly important because he led the chip's design and turned the architecture into working silicon, using the silicon-gate process technology he had pioneered at Fairchild.

Intel 4004 Technical Specifications

The Intel 4004, released in 1971, had the following specifications:

- 4-bit processor architecture

- Clock speed of approximately 740 kHz

- Around 2,300 transistors

- Fabricated using 10-micrometer technology

Although these numbers seem extremely small compared to modern processors containing billions of transistors, the Intel 4004 represented a revolutionary step in computing.
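To make the "4-bit" label concrete: a 4-bit processor's arithmetic unit handles only 4 bits (values 0 to 15) per operation, so larger numbers must be processed one 4-bit piece at a time, with a carry passed from each step to the next. The Python sketch below models that general technique; the function name `add_nibble_by_nibble` is hypothetical, it uses plain binary rather than the decimal (BCD) digits a calculator would use, and it does not reproduce the 4004's actual instruction set.

```python
def add_nibble_by_nibble(a: int, b: int, width_nibbles: int = 4) -> tuple[int, int]:
    """Add two unsigned integers using only 4-bit additions plus a carry.

    Returns (sum, final_carry); sum is truncated to width_nibbles * 4 bits.
    """
    result = 0
    carry = 0
    for i in range(width_nibbles):
        shift = 4 * i
        nib_a = (a >> shift) & 0xF        # extract one 4-bit piece of each operand
        nib_b = (b >> shift) & 0xF
        total = nib_a + nib_b + carry     # one 4-bit ALU step: at most 15 + 15 + 1 = 31
        result |= (total & 0xF) << shift  # keep the low 4 bits as this piece of the result
        carry = total >> 4                # anything beyond 4 bits carries into the next step
    return result, carry


# A 16-bit addition done as four separate 4-bit steps.
print(add_nibble_by_nibble(1234, 5678))  # (6912, 0)
```

Each loop iteration stands in for one pass through a 4-bit adder, which is why a 4-bit chip needs several steps for numbers a wider processor could add at once.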

Why the 4004 Changed Computing Forever

Before the microprocessor, computers required multiple circuit boards filled with individual components. The Intel 4004 demonstrated that an entire CPU could be integrated into a single chip.

This breakthrough enabled the development of:

- Personal computers

- Embedded electronics

- Industrial control systems

- Early programming platforms

Over time, microprocessors became powerful enough to support modern technologies including artificial intelligence, high-speed cloud computing, and advanced operating systems.

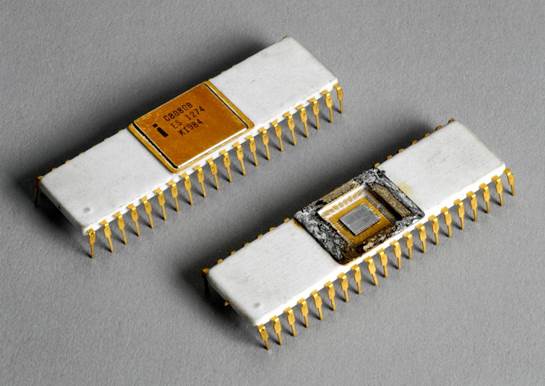

Early Intel Processor Development – 8008, 8080, and 8085

Intel 8008 – The First 8-Bit Intel CPU

In 1972, Intel introduced the 8008, the company’s first 8-bit processor. Compared with the 4-bit Intel 4004, the new chip could process more complex instructions and handle larger memory spaces.

The Intel 8008 contained approximately 3,500 transistors, representing a significant improvement over its predecessor. It could address up to 16 KB of memory, enabling developers to create more advanced software programs.

Although it was initially designed for terminal systems and embedded devices, the 8008 demonstrated that microprocessors could eventually power general-purpose computers.

Intel 8080 – The Processor That Powered Early Personal Computers

The Intel 8080, released in 1974, marked one of the most important milestones in computer history. It was significantly faster and more powerful than the 8008.

Key improvements included:

- Higher clock speeds

- Expanded instruction set

- Support for up to 64 KB of memory

The 8080 became the processor used in the famous Altair 8800, widely considered the first commercially successful personal computer kit.

The Altair 8800 inspired many early software developers, including the founders of Microsoft, who created the first BASIC interpreter for the machine.

Intel 8085 Improvements Over Previous CPUs

In 1976, Intel released the 8085, an improved version of the 8080. While the architecture was similar, the 8085 offered several technical enhancements.

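First, the processor required fewer supporting chips, because it integrated the clock generator and system control logic that the 8080 needed externally, making computer systems simpler and cheaper to build. Second, it ran from a single +5 V power supply instead of the three supply voltages the 8080 required, which simplified power design.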

Another important improvement was its compatibility with existing 8080 software. Developers could run previously written programs without major modifications, making the transition to the newer processor relatively easy.

The Intel 8085 also became widely used in industrial equipment, laboratory devices, and early embedded systems.

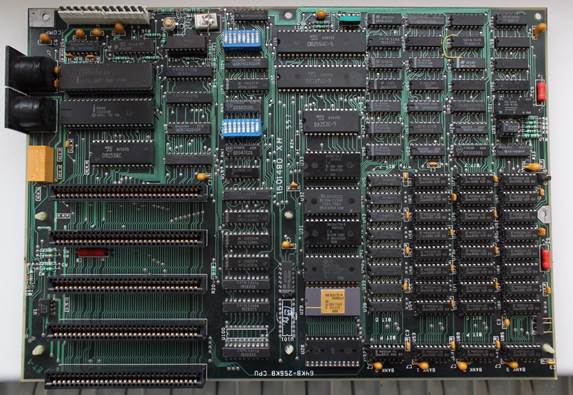

Birth of the x86 Architecture – Intel 8086 and 8088

Intel 8086 – The Start of x86 Architecture

In 1978, Intel introduced the 8086 processor, a chip that would eventually define the future of personal computing. The 8086 was the first processor built on what became known as the x86 architecture, a design that continues to power most desktop and laptop computers today.

The processor featured a 16-bit architecture, allowing it to process more data per instruction than the earlier 8-bit processors. It also supported 1 MB of addressable memory, which was a massive improvement at the time. These capabilities allowed developers to build more sophisticated applications and operating systems.

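Another important innovation of the 8086 was its segmented memory model. The chip's registers were only 16 bits wide, so it formed each 20-bit physical address by shifting a 16-bit segment value left by four bits and adding a 16-bit offset. This let programs reach the full 1 MB address space and became essential for developing complex software environments and operating systems.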
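The segment:offset arithmetic behind the 8086's 1 MB address space fits in a few lines. In real mode, the physical address is the 16-bit segment multiplied by 16 (shifted left by four bits) plus the 16-bit offset, truncated to the 20 bits of the 8086's address bus. The Python sketch below models that rule; the function name `physical_address` is hypothetical.

```python
def physical_address(segment: int, offset: int) -> int:
    """Compute an 8086 real-mode physical address from a segment:offset pair."""
    if not (0 <= segment <= 0xFFFF and 0 <= offset <= 0xFFFF):
        raise ValueError("segment and offset must both be 16-bit values")
    # Shift the segment left by 4 bits (x16), add the offset, then keep
    # only 20 bits, since the 8086 has a 20-bit address bus.
    return ((segment << 4) + offset) & 0xFFFFF


print(hex(physical_address(0x1234, 0x0010)))  # 0x12350
print(hex(physical_address(0x1235, 0x0000)))  # 0x12350 (same byte, different pair)
print(hex(physical_address(0xFFFF, 0x0010)))  # 0x0 (wraps around on a 20-bit bus)
```

The first two calls show a well-known quirk of this scheme: many different segment:offset pairs can name the same physical byte.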

Compared with earlier chips like the 8085, the 8086 dramatically improved performance and flexibility. Its expanded instruction set, which added features such as hardware multiply and divide, made it easier for engineers to write advanced software.

Because of these improvements, the 8086 became the foundation for future generations of Intel processors, and its instruction set still influences modern CPUs used in systems running Windows, Linux, and even virtualization environments in large cloud data centers.

Intel 8088 and the IBM PC Revolution

The Intel 8088, introduced in 1979, was closely related to the 8086 but had an important difference: it used an 8-bit external data bus while maintaining a 16-bit internal architecture.

This design made the processor cheaper and easier to integrate into computer systems. As a result, IBM selected the Intel 8088 for its groundbreaking IBM PC 5150, released in 1981.

This decision changed the computer industry forever.

The IBM PC quickly became the standard platform for personal computing, and its open architecture allowed other manufacturers to produce compatible machines. Software developers began creating applications specifically for the IBM PC platform.

Operating systems from Microsoft, including MS-DOS and later Windows, were designed to run on Intel processors. This powerful combination of Intel hardware and Microsoft software became known as the “Wintel” ecosystem, which dominated the PC market for decades.

Intel 80286 and the Rise of Protected Mode Computing

Major Innovations Introduced by the 80286

The Intel 80286, commonly called the 286, was released in 1982 and represented a major leap forward in processor design.

One of the most important features introduced by the 286 was protected mode, a memory management system that allowed operating systems to isolate programs from one another. This helped prevent a faulty program from corrupting the memory of other programs or crashing the entire system.

The processor also supported 16 MB of memory, which was significantly more than previous Intel processors. This improvement allowed software developers to create more complex applications and multitasking environments.

The 286 became the main processor used in the IBM PC AT, one of the most successful personal computers of the 1980s.

Difference Between 8086 and 80286

Compared with the 8086, the 286 introduced several important improvements:

• much larger memory addressing capabilities

• protected memory architecture

• improved performance and clock speeds

These improvements made it possible to run more advanced operating systems and applications.

For example, graphical environments such as early versions of Windows required more memory and processing power than earlier command-line systems.

Intel 80386 – The Beginning of Modern 32-bit Computing

Full 32-bit Architecture

The Intel 80386, released in 1985, was Intel’s first 32-bit x86 processor. This new architecture allowed computers to operate on 32-bit values in a single instruction and to access much larger memory spaces.

The processor could address up to 4 GB of memory, which was an enormous capacity at the time.

This capability made the 386 ideal for advanced computing tasks and professional applications.
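Each memory limit quoted in this timeline follows directly from the width of the processor's address bus: with n address lines, a chip can select 2^n distinct bytes. The short Python sketch below checks the figures, assuming the commonly cited address widths of 14 bits (8008), 16 bits (8080), 20 bits (8086), 24 bits (80286), and 32 bits (80386); the helper names are illustrative.

```python
# Commonly cited address-bus widths for the processors in this article.
ADDRESS_BITS = {
    "8008": 14,
    "8080": 16,
    "8086": 20,
    "80286": 24,
    "80386": 32,
}

UNITS = ["bytes", "KB", "MB", "GB"]


def addressable_bytes(bits: int) -> int:
    # n address lines can select 2**n distinct byte addresses.
    return 2 ** bits


def format_bytes(n: int) -> str:
    """Render a byte count in binary units (1 KB = 1024 bytes)."""
    unit = 0
    while n >= 1024 and unit < len(UNITS) - 1:
        n //= 1024  # exact here, because every size is a power of two
        unit += 1
    return f"{n} {UNITS[unit]}"


for cpu, bits in ADDRESS_BITS.items():
    print(f"{cpu}: {bits}-bit address -> {format_bytes(addressable_bytes(bits))}")
# 8008: 14-bit address -> 16 KB
# 8080: 16-bit address -> 64 KB
# 8086: 20-bit address -> 1 MB
# 80286: 24-bit address -> 16 MB
# 80386: 32-bit address -> 4 GB
```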

Virtual Memory and Multitasking

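Another groundbreaking feature of the 386 was its built-in paging unit, which made virtual memory practical on PCs. Virtual memory lets a computer extend its physical RAM by temporarily moving inactive data to a storage device such as a hard drive and loading it back when it is needed.

In practice, this swap space lived on hard drives, which were steadily replacing floppy disks as the main storage in PCs of that era.

Because each program could receive its own address space, and the combined memory used by all running programs could exceed the installed RAM, virtual memory made multitasking far more practical.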
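The core of paged virtual memory is an address translation step. The 386 divides memory into 4 KB pages: the upper bits of a virtual address select a page, the low 12 bits give the offset inside that page, and a page table maps each virtual page to a physical page frame. If the page is not present, the processor raises a page fault so the operating system can load it from disk. The Python sketch below uses one flat dictionary as the page table, a deliberate simplification (the real 386 uses a two-level page directory and page tables); the `PageTable` and `PageFault` names are hypothetical.

```python
PAGE_SIZE = 4096   # 4 KB pages, as on the 386
OFFSET_BITS = 12   # log2(4096)


class PageFault(Exception):
    """Raised when a virtual page is not currently mapped to physical memory."""


class PageTable:
    def __init__(self) -> None:
        # virtual page number -> physical frame number (flat table: a simplification)
        self.entries: dict[int, int] = {}

    def map(self, virtual_page: int, physical_frame: int) -> None:
        self.entries[virtual_page] = physical_frame

    def translate(self, virtual_address: int) -> int:
        page = virtual_address >> OFFSET_BITS        # upper bits: which page
        offset = virtual_address & (PAGE_SIZE - 1)   # low 12 bits: position in the page
        if page not in self.entries:
            # A real OS would now load the page from disk and retry the access.
            raise PageFault(f"page {page:#x} not present")
        return (self.entries[page] << OFFSET_BITS) | offset


table = PageTable()
table.map(0x400, 0x12)                  # virtual page 0x400 lives in physical frame 0x12
print(hex(table.translate(0x400ABC)))   # 0x12abc
try:
    table.translate(0x500000)
except PageFault as err:
    print("page fault:", err)           # page fault: page 0x500 not present
```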

Why the 386 Was Important for Windows and Linux

The 386 processor became a key platform for operating systems such as Windows and Linux.

For example, Windows 3.0 offered a “386 enhanced mode” that used the processor's paging and virtual 8086 mode to run several DOS programs at once alongside Windows applications. Meanwhile, the first versions of the Linux kernel were written specifically for the 386 and relied on its protected-mode memory management.

This processor therefore played a critical role in establishing modern operating system ecosystems.

Intel 80486 – The First Highly Integrated CPU

Built-In Floating Point Unit

Released in 1989, the Intel 80486 introduced several innovations that significantly improved CPU performance.

One major change was the integration of the floating-point unit (FPU) directly into the processor. Previously, this component required a separate chip.

The built-in FPU dramatically improved performance in mathematical and scientific calculations.

Integrated Cache Memory

Another major improvement was the inclusion of on-chip cache memory. Cache memory stores frequently used data close to the processor, reducing the time needed to retrieve it.

This design made the 486 significantly faster than the 386.
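Why an on-chip cache helps can be shown with a toy model. The Python sketch below simulates a small direct-mapped cache: every memory block maps to exactly one cache line, and an access is a hit if that line already holds the right block. It is an illustrative simplification; the 486's actual on-chip cache was organized differently (it was set-associative), and the line size and line count here are arbitrary assumptions.

```python
from typing import Optional


class DirectMappedCache:
    """Toy direct-mapped cache that only tracks which memory blocks are resident."""

    def __init__(self, num_lines: int = 8, line_size: int = 16) -> None:
        self.num_lines = num_lines
        self.line_size = line_size
        self.tags: list[Optional[int]] = [None] * num_lines  # which block each line holds
        self.hits = 0
        self.misses = 0

    def access(self, address: int) -> bool:
        block = address // self.line_size  # memory block containing this byte
        index = block % self.num_lines     # the only line this block may occupy
        tag = block // self.num_lines      # distinguishes blocks sharing that line
        if self.tags[index] == tag:
            self.hits += 1
            return True
        self.tags[index] = tag             # miss: fetch the block from RAM into the line
        self.misses += 1
        return False


cache = DirectMappedCache()
# Read a 64-byte array four times, one 4-byte word at a time.
for _ in range(4):
    for addr in range(0, 64, 4):
        cache.access(addr)
print(cache.hits, cache.misses)  # 60 4
```

Only the first touch of each 16-byte block misses; every later read is served from the cache instead of slower main memory, which is the kind of reuse an on-chip cache exploits.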

Performance Improvements vs Intel 386

Compared with the 386, the 486 provided:

• faster instruction execution

• integrated floating-point processing

• improved cache memory design

These improvements enabled faster applications such as advanced programming tools, graphics software, and early network applications.

The Pentium Era – Superscalar Processor Revolution

Pentium (1993)

In 1993, Intel introduced the Pentium processor, marking a major shift in CPU design. The Pentium featured a superscalar architecture with two integer pipelines, allowing it to execute up to two instructions in the same clock cycle.

This dramatically improved performance compared with the 486.
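Superscalar execution can be illustrated with a toy cycle count. The Pentium's two integer pipelines (called U and V) could issue a pair of instructions together when the second neither read nor overwrote the register written by the first. The Python sketch below models only that dependency rule and assumes every instruction takes one cycle; the real Pentium's pairing rules were considerably more detailed, and the `Instr` encoding is hypothetical.

```python
from typing import NamedTuple


class Instr(NamedTuple):
    dest: str               # register written by the instruction
    srcs: tuple[str, ...]   # registers read by the instruction


def cycles_single_issue(program: list[Instr]) -> int:
    # A scalar, in-order processor issues one instruction per cycle.
    return len(program)


def cycles_dual_issue(program: list[Instr]) -> int:
    """In-order dual issue: pair two adjacent instructions if the second
    neither reads nor overwrites the first one's destination register."""
    cycles = 0
    i = 0
    while i < len(program):
        first = program[i]
        if i + 1 < len(program):
            second = program[i + 1]
            if first.dest not in second.srcs and first.dest != second.dest:
                cycles += 1  # both instructions issue together (U and V pipes)
                i += 2
                continue
        cycles += 1          # only the first instruction issues this cycle
        i += 1
    return cycles


program = [
    Instr("eax", ("eax", "ebx")),  # eax = eax + ebx
    Instr("ecx", ("ecx", "edx")),  # ecx = ecx + edx  (independent: pairs)
    Instr("esi", ("eax",)),        # esi = eax
    Instr("edi", ("esi",)),        # edi = esi        (needs esi: cannot pair)
]
print(cycles_single_issue(program), cycles_dual_issue(program))  # 4 3
```

The saving depends entirely on how many adjacent instructions are independent, which is why compilers of the era learned to reorder code for the Pentium's pairing rules.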

Pentium Pro (1995)

The Pentium Pro introduced out-of-order execution, which allowed the processor to run instructions in a different order than they appear in the program, executing each one as soon as its inputs were ready instead of stalling behind a slower instruction.

This made the Pentium Pro ideal for servers and professional workstations.

Pentium II (1997)

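The Pentium II combined the Pentium Pro's out-of-order design with MMX multimedia instructions, which Intel had first shipped earlier in 1997 on the Pentium MMX, improving performance in graphics and audio applications.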

This made personal computers better suited for entertainment and multimedia.

Pentium III (1999)

The Pentium III expanded multimedia performance further with SSE instructions, which accelerated graphics processing and scientific computing.

These processors powered millions of computers running Windows during the late 1990s.

Pentium 4 and the Race for Clock Speed

The Pentium 4, released in 2000, focused on achieving extremely high clock speeds.

Intel designed the processor using the NetBurst architecture, whose very long instruction pipeline allowed clock speeds to climb to nearly 4 GHz.

Although this design increased raw processing speed, it also generated significant heat and required powerful cooling systems.

This led Intel engineers to rethink processor design strategies.

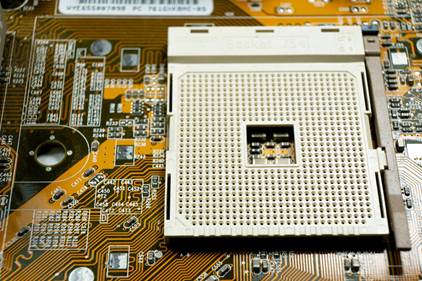

The Multi-Core Revolution – Intel Core Architecture

Core Duo and Core 2 Duo

In 2006, Intel introduced the Core architecture, which replaced the Pentium 4 design.

Instead of focusing on clock speed, the Core architecture focused on energy efficiency and multi-core processing.

The Core 2 Duo processors featured two processing cores on a single chip, allowing a computer to execute two threads truly in parallel rather than rapidly switching a single core between tasks.

Nehalem Architecture

The Nehalem architecture, introduced in 2008, integrated the memory controller directly into the processor.

This shortened the path between the CPU and RAM, lowering memory latency and improving overall performance.

Sandy Bridge and Ivy Bridge

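Later generations integrated graphics more tightly. Sandy Bridge (2011) placed the graphics processing unit (GPU) on the same die as the CPU cores, and Ivy Bridge (2012) refined that design on a smaller manufacturing process.

This allowed everyday computers to handle desktop graphics, video playback, and light gaming without a dedicated graphics card.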

Haswell and Skylake Improvements

Haswell (2013) and Skylake (2015) continued this trend with a strong focus on power efficiency, reducing idle power consumption and extending battery life in laptops and other mobile devices.

Modern Intel Processors – Hybrid CPUs and AI Computing

Modern Intel processors such as Alder Lake and Raptor Lake use hybrid architectures that combine:

• performance cores

• efficiency cores

This design allows processors to handle both high-performance workloads and energy-efficient background tasks.

These processors power modern computers running Windows, Linux, and advanced enterprise software platforms.

They are also used in data centers that support cloud computing services used by companies like Google and Oracle.

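Many recent Intel processors also accelerate artificial intelligence workloads in hardware. Instruction set extensions such as Intel Deep Learning Boost (VNNI) and, in recent Xeon server chips, Advanced Matrix Extensions (AMX) speed up the matrix arithmetic used in machine learning and real-time data analysis.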

Intel’s Influence on Modern Technology

Intel technology plays a major role in modern computing infrastructure.

Many servers running cloud platforms rely on Intel processors to handle massive workloads. These systems support services such as:

• web hosting

• artificial intelligence research

• big data processing

Intel processors also power computers used for programming, document processing including PDF editing, and security systems that protect sensitive data using encryption and password authentication.

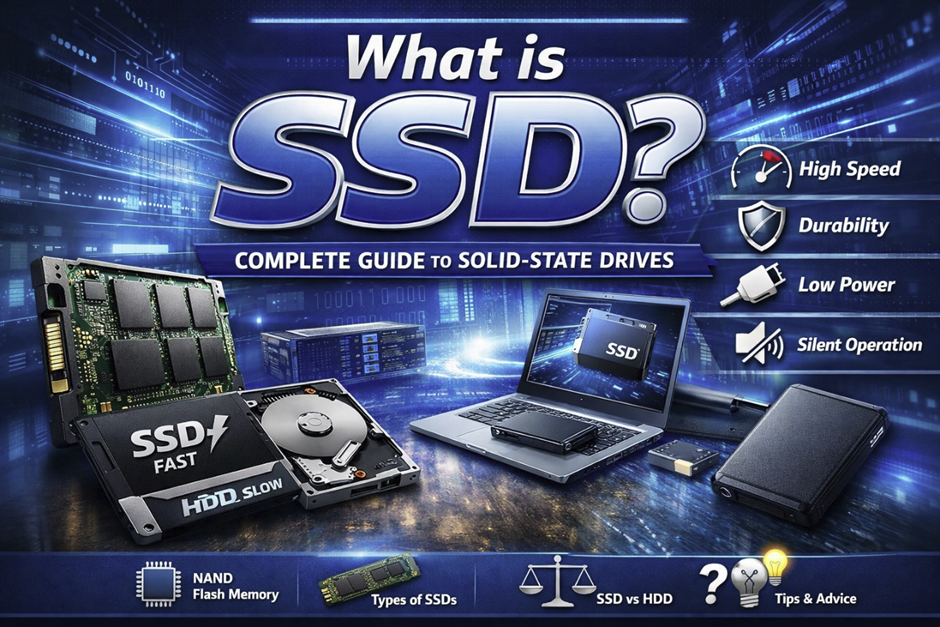

Storage devices evolved alongside processors—from early floppy disks to modern USB drives and solid-state storage systems.

Competition That Shaped Intel Innovation

Intel has faced competition from several technology companies.

The most significant rival is AMD, which develops competing processors for personal computers and servers.

Meanwhile, the processors inside most smartphones, including devices from Samsung, Sony, and Motorola, are based on the ARM architecture rather than x86 designs.

This competition has pushed Intel to continue improving processor performance and efficiency.

Conclusion – Why Intel Still Dominates Processor Technology

For more than five decades, Intel has played a central role in the evolution of computing technology. From the first microprocessor in 1971 to modern hybrid CPUs designed for artificial intelligence, the company has continually advanced semiconductor innovation.

Today, Intel processors power millions of devices worldwide—from personal computers and servers to industrial systems and research laboratories.

The legacy of Intel’s engineers and innovations continues to shape the future of computing, ensuring that the evolution of processors remains at the heart of modern digital technology.